Moonshot's Kimi K2: A Comprehensive Review of the Open-Source AI Model That Rivals GPT-4

Updated: July 13, 2025

The Open-Source AI Revolution Gets Real

Picture this: An AI model that doesn't just answer your questions but rolls up its sleeves and gets to work. That's exactly what Moonshot AI has delivered with Kimi K2, and this Kimi K2 review will show you why the AI landscape just shifted dramatically.

For years, proprietary models like GPT-4 and Claude have dominated the AI space, keeping their architectures locked behind corporate walls. Now Moonshot AI has thrown down the gauntlet with Kimi K2, a 1-trillion-parameter model that is not just competitive with the giants but beats them on several coding and math benchmarks.

What makes this release particularly fascinating? Kimi K2 isn't just another large language model. It's what Moonshot calls "Open Agentic Intelligence"—a model designed from the ground up to take action, not just provide answers. With 32 billion active parameters in its Mixture-of-Experts architecture, it represents a new breed of AI that's both powerful and accessible.

The timing couldn't be better. As developers and researchers increasingly demand transparency and control over their AI tools, Kimi K2 arrives as a fully open-source alternative that doesn't compromise on performance. Whether you're building complex coding assistants, automating workflows, or tackling mathematical proofs, this model promises to deliver enterprise-grade capabilities without the enterprise-grade restrictions.

In this comprehensive review, we'll dissect everything that makes Kimi K2 tick—from its innovative MuonClip optimizer to its stunning benchmark results that have the AI community buzzing. You'll discover why this open-source AI model might just be the GPT-4 alternative you've been waiting for.

Technical Deep Dive: Understanding the Engineering Marvel

The Mixture-of-Experts Architecture Explained

Let's start with what makes Kimi K2 fundamentally different from traditional AI models. Imagine a massive corporation where, instead of routing every decision through the CEO, you have specialized departments that handle specific tasks. That's essentially how the Mixture-of-Experts (MoE) architecture works.

Kimi K2 boasts 1 trillion total parameters—a mind-boggling number that would typically require enormous computational resources. But here's the clever part: only 32 billion parameters are active at any given time. Think of it like having a thousand specialists on staff, but only calling in the relevant experts for each specific task. This design allows Kimi K2 to maintain the knowledge capacity of a massive model while keeping computational costs manageable.

The model routes inputs to the most relevant "experts" within its architecture. In MoE designs this selection happens per token inside each expert layer: a small gating network scores the experts and only the top-scoring few run, so a coding prompt and a math prompt can end up exercising different subsets of parameters. This dynamic routing is what enables Kimi K2 to punch above its weight class, competing with models that have far more active parameters.
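To make the routing idea concrete, here is a minimal top-k gating sketch in plain Python. It is a generic illustration of Mixture-of-Experts routing, not Moonshot's implementation: the expert count, vector size, `top_k` value and the toy "experts" (simple scaling functions) are all assumptions chosen for readability.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(token_vec, gate_weights, top_k):
    """Pick the top_k experts for one token; return (expert_id, weight) pairs."""
    # One gating score per expert: dot product of the token and that expert's gate vector.
    scores = [sum(t * g for t, g in zip(token_vec, gate)) for gate in gate_weights]
    # Keep only the top_k highest-scoring experts.
    chosen = sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)[:top_k]
    # Renormalise over the chosen experts so their weights sum to 1.
    weights = softmax([scores[e] for e in chosen])
    return list(zip(chosen, weights))


def moe_layer(token_vec, gate_weights, experts, top_k=2):
    """Weighted sum of the outputs of the selected experts only."""
    routed = route_token(token_vec, gate_weights, top_k)
    out = [0.0] * len(token_vec)
    for expert_id, weight in routed:
        expert_out = experts[expert_id](token_vec)  # unselected experts never run
        out = [o + weight * x for o, x in zip(out, expert_out)]
    return out, [e for e, _ in routed]


# Toy setup (hypothetical sizes): 8 experts, each scales the input by a different factor.
random.seed(0)
DIM, NUM_EXPERTS = 4, 8
gate_weights = [[random.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
experts = [lambda v, s=s: [s * x for x in v] for s in range(1, NUM_EXPERTS + 1)]

token = [0.5, -1.0, 0.25, 2.0]
output, used = moe_layer(token, gate_weights, experts, top_k=2)
print("experts used:", used, "of", NUM_EXPERTS)
```

Only two of the eight toy experts do any work for this token, which is the same principle that lets a model with a very large total parameter count run with a much smaller active set.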

The MuonClip Optimizer: Solving the Stability Challenge

Here's where things get really interesting from a technical perspective. Traditional AI models typically use the AdamW optimizer—think of it as the reliable sedan of the optimization world. Moonshot's previous work showed that their Muon optimizer was like upgrading to a sports car, delivering significantly better token efficiency during training.

But there was a problem. When scaling up to Kimi K2's size, the training process would occasionally experience what engineers call "exploding attention logits"—essentially, the model would have random meltdowns during training, like a high-performance engine overheating.

Enter MuonClip, Moonshot's innovative solution. Using a technique called qk-clip, it acts like an intelligent governor on that high-performance engine: when attention logits grow too large, it rescales the query and key projection weights after the optimizer update to pull the logits back under a threshold. The result? Kimi K2 was trained on 15.5 trillion tokens with zero training spikes, a remarkable achievement that demonstrates both the power and stability of this approach.
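The clipping idea can be sketched in a few lines. This is a simplified, single-head illustration under stated assumptions, not Moonshot's training code: it omits the usual attention scaling and masking, and the threshold, the balancing exponent `alpha` and the toy matrices are hypothetical values.

```python
def matvec(matrix, vec):
    """Multiply a row-major matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


def max_attention_logit(w_q, w_k, inputs):
    """Largest raw query-key dot product over all pairs of input positions."""
    queries = [matvec(w_q, x) for x in inputs]
    keys = [matvec(w_k, x) for x in inputs]
    return max(sum(a * b for a, b in zip(q, k)) for q in queries for k in keys)


def scale(matrix, factor):
    """Return a copy of the matrix with every entry multiplied by factor."""
    return [[factor * m for m in row] for row in matrix]


def qk_clip(w_q, w_k, inputs, threshold, alpha=0.5):
    """Shrink W_q and W_k when the largest logit exceeds the threshold.

    eta = min(threshold / max_logit, 1). W_q is scaled by eta**alpha and
    W_k by eta**(1 - alpha), so every query-key logit shrinks by exactly eta.
    """
    peak = max_attention_logit(w_q, w_k, inputs)
    eta = min(threshold / peak, 1.0) if peak > 0 else 1.0
    return scale(w_q, eta ** alpha), scale(w_k, eta ** (1 - alpha)), eta


# Toy weights that produce an oversized logit of 100.
w_q = [[10.0, 0.0], [0.0, 10.0]]
w_k = [[10.0, 0.0], [0.0, 10.0]]
inputs = [[1.0, 0.0], [0.0, 1.0]]

before = max_attention_logit(w_q, w_k, inputs)
w_q, w_k, eta = qk_clip(w_q, w_k, inputs, threshold=50.0)
after = max_attention_logit(w_q, w_k, inputs)
print(f"max logit {before:.1f} -> {after:.1f} (eta={eta:.2f})")
```

Because the correction is applied to the weights rather than to the logits themselves, the forward pass is left untouched; the weights are simply nudged back into a safe range whenever they drift.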

Agentic Capabilities: From Answering to Acting

What truly sets Kimi K2 apart is its focus on agentic intelligence. While most language models are designed to be knowledgeable assistants, Kimi K2 was built to be a capable colleague. You can explore the official Kimi K2 documentation to understand its full capabilities.

The secret sauce involves two key innovations:

Large-Scale Agentic Data Synthesis: Moonshot developed a comprehensive pipeline that simulates real-world tool-using scenarios at massive scale. They created hundreds of domains with thousands of tools, then generated AI agents with diverse tool sets to interact in these environments. It's like training a pilot not just on theory, but in thousands of different flight simulators with varying conditions.

General Reinforcement Learning: Here's where Kimi K2 breaks new ground. Traditional reinforcement learning works great for tasks with clear right/wrong answers (like math problems). But what about subjective tasks like writing a research report? Kimi K2 uses a self-judging mechanism where the model acts as its own critic, continuously improving its ability to evaluate and refine its own work.

This combination means Kimi K2 doesn't just tell you how to analyze salary data—it actually loads the dataset, runs statistical tests, creates visualizations, and delivers a complete analysis. It's the difference between a consultant who gives advice and one who rolls up their sleeves and does the work.
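The difference between answering and acting comes down to a loop: the model proposes a tool call, the runtime executes it, and the result is fed back until the model declares it is done. The sketch below shows that loop with a scripted stand-in for the model, so it runs without any API. The tool names, the tiny dataset and the `scripted_model` function are all hypothetical.

```python
import statistics


# Hypothetical tools the agent may call; names and data are illustrative only.
def load_salaries(_args, state):
    state["rows"] = [
        {"year": 2023, "remote": True, "salary": 120},
        {"year": 2023, "remote": False, "salary": 100},
        {"year": 2024, "remote": True, "salary": 130},
        {"year": 2024, "remote": False, "salary": 104},
    ]
    return f"loaded {len(state['rows'])} rows"


def mean_salary(args, state):
    rows = [r for r in state["rows"] if r["remote"] == args["remote"]]
    return statistics.mean(r["salary"] for r in rows)


TOOLS = {"load_salaries": load_salaries, "mean_salary": mean_salary}


def scripted_model(history):
    """Stand-in for the LLM: returns the next action given the transcript so far."""
    step = sum(1 for m in history if m["role"] == "tool")
    plan = [
        {"tool": "load_salaries", "args": {}},
        {"tool": "mean_salary", "args": {"remote": True}},
        {"tool": "mean_salary", "args": {"remote": False}},
    ]
    if step < len(plan):
        return plan[step]
    remote, onsite = history[-2]["content"], history[-1]["content"]
    return {"final": f"Remote pay averages {remote}, on-site {onsite}."}


def run_agent(model, task, max_steps=10):
    """Execute tool calls proposed by the model until it returns a final answer."""
    history = [{"role": "user", "content": task}]
    state = {}
    for _ in range(max_steps):
        action = model(history)
        if "final" in action:
            return action["final"], history
        result = TOOLS[action["tool"]](action["args"], state)
        history.append({"role": "tool", "name": action["tool"], "content": result})
    raise RuntimeError("agent did not finish within max_steps")


answer, transcript = run_agent(scripted_model, "Compare remote and on-site salaries.")
print(answer)
```

In a real deployment the scripted function would be replaced by a call to the model, and the `max_steps` cap guards against a loop that never converges.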

Benchmark Analysis: Where the Rubber Meets the Road

Coding Performance That Turns Heads

Let's talk numbers, because in the AI world, benchmarks are where models prove their worth. In this section of our Kimi K2 review, the results are genuinely impressive.

On SWE-bench Verified—the gold standard for evaluating AI coding abilities—Kimi K2 achieved a 65.8% success rate on single attempts. To put this in perspective, GPT-4.1 scores 54.6%, and even the highly regarded DeepSeek V3 manages only 38.8%. When allowed multiple attempts, Kimi K2 pushes this to an astounding 71.6%.

But what does this mean in practical terms? SWE-bench Verified tests whether an AI can fix real bugs drawn from real software projects. A 65.8% success rate means Kimi K2 produced a passing patch for roughly two out of three of the benchmark's tasks in a single attempt. That is a benchmark result rather than a guarantee for your own codebase, but for teams looking to enhance their development workflow, it makes Kimi K2 a strong candidate for the AI developer tools arsenal.
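For readers who want the gaps spelled out, the arithmetic on the SWE-bench Verified figures quoted above looks like this. The snippet uses only the single-attempt scores cited in this review.

```python
# SWE-bench Verified single-attempt scores quoted in this review (percent).
scores = {"Kimi K2": 65.8, "GPT-4.1": 54.6, "DeepSeek V3": 38.8}

baseline = scores["Kimi K2"]
for name, score in scores.items():
    if name == "Kimi K2":
        continue
    gap_points = baseline - score  # absolute gap in percentage points
    ratio = baseline / score       # relative multiple
    print(f"Kimi K2 vs {name}: +{gap_points:.1f} points, {ratio:.2f}x")

# Prints:
# Kimi K2 vs GPT-4.1: +11.2 points, 1.21x
# Kimi K2 vs DeepSeek V3: +27.0 points, 1.70x
```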

LiveCodeBench v6 tells a similar story. With a 53.7% pass rate, Kimi K2 outperforms every model tested except Claude Sonnet 4. This benchmark focuses on contemporary coding challenges, testing the model's ability to write functional code from scratch rather than just fix existing code.

Mathematical Reasoning: A STEM Powerhouse

The AIME (American Invitational Mathematics Examination) results showcase Kimi K2's mathematical prowess. Scoring 69.6% on AIME 2024 and 49.5% on AIME 2025, it demonstrates exceptional ability in competition-level mathematics. For context, these are problems that challenge the brightest high school mathematics students in the United States.

What's particularly noteworthy is how Kimi K2 maintains strong performance beyond pure mathematics. On GPQA-Diamond, a benchmark of graduate-level science questions, it achieves 75.1%, surpassing both GPT-4.1 (66.3%) and Gemini 2.5 Flash (68.2%).

The model's 97.4% accuracy on MATH-500 is especially remarkable. This benchmark tests fundamental mathematical reasoning across algebra, geometry, probability, and number theory. Such near-perfect performance suggests a solid command of the underlying concepts, although a benchmark score alone cannot rule out pattern-matching.

Tool Use: Where Agentic AI Shines

The Tau2-bench results reveal Kimi K2's sophisticated tool-use capabilities. Scoring 70.6% on retail tasks, 56.5% on airline operations, and 65.8% on telecom scenarios, it demonstrates versatility across different business domains. These aren't simple query-response tests—they require the model to understand context, select appropriate tools, and execute multi-step workflows.

On ACEBench, which specifically evaluates English-language tool use, Kimi K2 achieves 76.5% accuracy. While slightly behind GPT-4.1's 80.1%, it outperforms most other models, including Claude Sonnet 4 (76.2%) and Gemini 2.5 Flash (74.5%).

Real-World Applications: From Benchmarks to Business Value

Transforming Data Analysis Workflows

One of the most compelling demonstrations of Kimi K2's capabilities involves salary data analysis. Given a dataset and a complex statistical question, the model doesn't just suggest an approach—it executes the entire analysis pipeline. In the documented example, Kimi K2:

- Loads and filters the dataset for relevant years

- Creates sophisticated visualizations with consistent color palettes

- Performs statistical tests to identify interaction effects

- Generates publication-ready plots and interpretations

This isn't theoretical capability—it's practical, actionable intelligence that can transform how businesses approach data analysis. Instead of hiring separate analysts and programmers, teams can leverage Kimi K2 to handle the entire workflow.

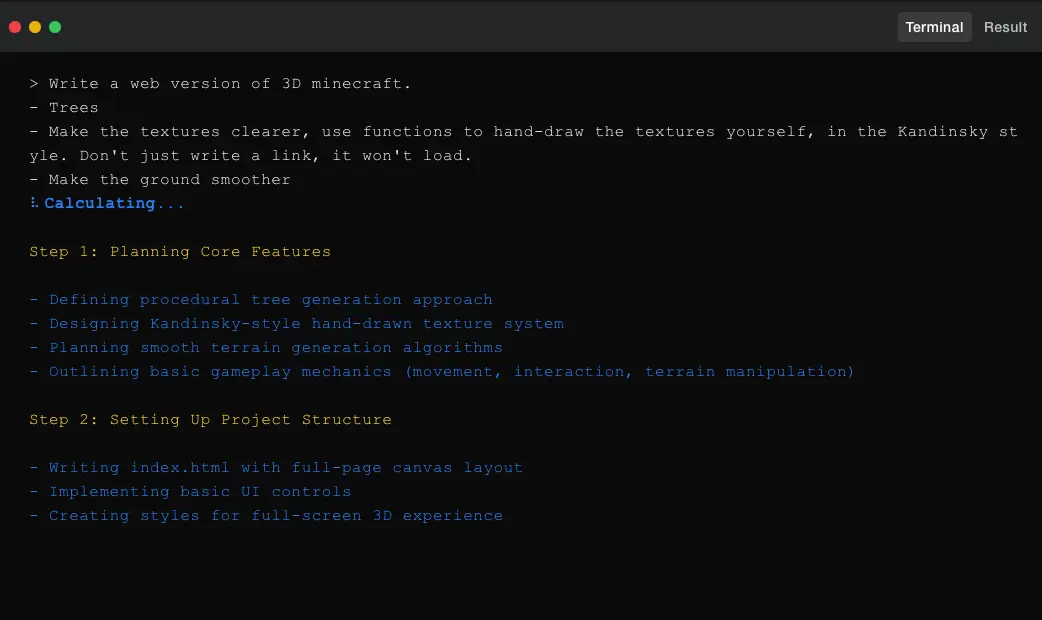

Command-Line Mastery and Development Automation

Kimi K2's integration with terminal environments opens up powerful automation possibilities. The model can:

- Navigate file systems and edit code directly

- Run test suites and debug failures

- Refactor entire codebases (like converting Flask applications to Rust)

- Generate performance benchmarks and optimization reports

Consider the Minecraft development example from the documentation. Kimi K2 autonomously manages rendering, runs test cases, captures failure logs, and iteratively improves code until all tests pass. This level of autonomous development assistance was previously the realm of science fiction.

Building Intelligent Applications with MCP

The Model Context Protocol (MCP) support, while still in development for the web interface, promises to revolutionize how we build AI-powered applications. Developers can create tools that Kimi K2 automatically understands and uses, enabling:

- Custom business logic integration without complex prompt engineering

- Seamless connection to proprietary databases and APIs

- Industry-specific tool sets for specialized domains

- Multi-step workflows that adapt based on intermediate results
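The core idea behind the capabilities listed above is that each tool ships with a machine-readable description (a name, a human-readable summary and a JSON Schema for its inputs) that the model can read instead of relying on hand-written prompts. The sketch below illustrates that shape with a hypothetical in-process registry. It is not the MCP SDK and does not speak the MCP wire protocol; the `lookup_order` tool and its fake data are invented for the example.

```python
import json

# Hypothetical in-process registry; a real MCP server exposes tools over a protocol.
REGISTRY = {}


def register_tool(name, description, input_schema):
    """Decorator that stores a handler next to its machine-readable description."""
    def wrap(func):
        REGISTRY[name] = {
            "name": name,
            "description": description,
            "inputSchema": input_schema,
            "handler": func,
        }
        return func
    return wrap


@register_tool(
    name="lookup_order",
    description="Return the status of an order by its ID.",
    input_schema={
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
    },
)
def lookup_order(order_id):
    fake_db = {"A-100": "shipped", "A-101": "processing"}  # stand-in for a real database
    return {"order_id": order_id, "status": fake_db.get(order_id, "unknown")}


def list_tools():
    """What a model would be shown: descriptions only, no Python callables."""
    return [{k: v for k, v in tool.items() if k != "handler"} for tool in REGISTRY.values()]


def call_tool(name, arguments):
    """Check required arguments against the schema, then dispatch to the handler."""
    tool = REGISTRY[name]
    missing = [k for k in tool["inputSchema"].get("required", []) if k not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return tool["handler"](**arguments)


print(json.dumps(list_tools(), indent=2))
print(call_tool("lookup_order", {"order_id": "A-100"}))
```

Keeping the description separate from the handler is the design choice that matters here: the model only ever sees the schema, while the business logic stays on your side of the boundary.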

Deployment Flexibility for Every Scale

Unlike proprietary models that lock you into specific platforms, Kimi K2 offers multiple deployment options:

Cloud API Access: Through platform.moonshot.ai, developers get OpenAI/Anthropic-compatible endpoints, making migration straightforward. This approach suits teams wanting immediate access without infrastructure overhead.

Self-Hosted Solutions: For organizations requiring data sovereignty or custom configurations, Kimi K2 supports deployment on:

- vLLM for high-throughput serving

- SGLang for efficient batch processing

- KTransformers for heterogeneous CPU/GPU inference on more modest hardware

- TensorRT-LLM for NVIDIA-optimized inference

This flexibility means whether you're a startup experimenting with AI or an enterprise with strict compliance requirements, there's a deployment path that fits your needs.
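For the cloud API route, an OpenAI-compatible endpoint means a request is just a JSON body posted to a chat-completions URL. The sketch below builds such a request with the standard library and deliberately does not send it. The base URL and model identifier are assumptions made for illustration; check Moonshot's platform documentation for the current values before using them.

```python
import json
import urllib.request

# Assumptions for illustration only; verify both against the platform docs.
BASE_URL = "https://api.moonshot.ai/v1"
MODEL = "kimi-k2-0711-preview"


def build_chat_request(prompt, api_key, base_url=BASE_URL, model=MODEL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Summarise this stack trace.", api_key="sk-placeholder")
print(request.get_method(), request.full_url)

# To actually send it (needs a real key and network access):
# with urllib.request.urlopen(request) as resp:
#     reply = json.load(resp)["choices"][0]["message"]["content"]
```

Because the request shape matches the OpenAI format, pointing an existing client at a self-hosted server that exposes the same interface should only require changing the base URL and model name.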

Competitive Analysis: David Among Goliaths

Kimi K2 vs GPT-4: The Open-Source Uprising

When comparing Kimi K2 to OpenAI's GPT-4 family (the scores quoted below are for GPT-4.1), the results challenge conventional wisdom about open-source models. In several key areas, Kimi K2 comes out ahead:

Strengths over GPT-4:

- Higher single-attempt success rate on SWE-bench Verified (65.8% vs 54.6%)

- Better performance on LiveCodeBench v6 (53.7% vs 44.7%)

- Superior mathematical reasoning on AIME 2024 (69.6% vs 46.5%)

- Completely open architecture enabling customization and fine-tuning

Where GPT-4 maintains an edge:

- Broader general knowledge (SimpleQA: 42.3% vs 31.0%)

- More consistent performance across diverse tasks

- Mature ecosystem and extensive documentation

- Built-in multimodal capabilities (vision support coming to Kimi K2)

Kimi K2 vs Claude: The Agentic Advantage

Claude models, particularly Opus 4 and Sonnet 4, represent Kimi K2's strongest competition. The comparison reveals interesting trade-offs:

Kimi K2 advantages:

- Open-source availability vs proprietary restrictions

- Stronger mathematical performance in competition problems

- Sparse activation, with only 32B parameters active per token (Anthropic does not publish Claude's parameter counts, so a direct size comparison is not possible)

- No usage limits or API costs for self-hosted deployments

Claude's strengths:

- Slightly better performance on complex reasoning tasks

- More polished conversation abilities

- Extended context handling

- Established safety measures and content filtering

Kimi K2 vs DeepSeek V3: Open-Source Siblings

The comparison with DeepSeek V3 is particularly relevant since both are open-source MoE models. Kimi K2's advantages are substantial:

- Roughly 1.7 times the single-attempt score on SWE-bench Verified (65.8% vs 38.8%)

- Significantly better tool-use capabilities

- More stable training process thanks to MuonClip

- Superior agentic features out of the box

The OpenAI OSU Factor

Interestingly, the open-source AI landscape is about to get even more competitive. OpenAI is reportedly planning to launch their own open-source model (OSU) in July 2025, though rumors suggest it's been delayed due to a significant issue discovered just before release that requires retraining.

What we know about OpenAI's OSU model:

- It's reportedly much smaller than Kimi K2 (significantly less than 1 trillion parameters)

- Despite its smaller size, it's rumored to be "super powerful"

- The delay suggests OpenAI is taking the open-source release seriously

This development validates Moonshot's strategy with Kimi K2. By releasing a truly capable open-source model now, they've established themselves as leaders in the open AI movement before even OpenAI enters the space. It'll be fascinating to see how "she" (as insiders refer to the OSU model) compares to Kimi K2 when eventually released.

Use Case Recommendations

Based on our comprehensive analysis, here's when to choose each model:

Choose Kimi K2 when:

- Building autonomous coding assistants or debugging tools

- Requiring self-hosted deployment for data sensitivity

- Developing mathematical or scientific applications

- Creating custom agentic workflows with specific tools

- Needing cost-effective scaling without API limitations

Consider alternatives when:

- Requiring cutting-edge conversational abilities (Claude)

- Needing proven enterprise support and SLAs (GPT-4)

- Working with multimodal inputs immediately (GPT-4, Gemini)

- Prioritizing broad general knowledge over specialized capabilities

Limitations and Considerations

Current Constraints

While impressive, Kimi K2 isn't without limitations. During testing, several issues emerged:

Token Generation Challenges: On particularly complex reasoning tasks or when tool definitions are unclear, the model sometimes generates excessive tokens, leading to truncated outputs. This can be mitigated with careful prompt engineering but requires attention.

Performance Degradation with Tools: Interestingly, enabling tool use can sometimes reduce performance on certain tasks. The model may over-rely on tools when a direct answer would suffice.

One-Shot vs Agentic Framework: When building complete software projects, using Kimi K2 with simple one-shot prompting shows performance degradation compared to utilizing it within a proper agentic framework. This means developers need to invest in setting up appropriate scaffolding for complex tasks.

Vision Support: Currently, Kimi K2 lacks vision capabilities, putting it at a disadvantage for multimodal applications. Moonshot has indicated this is coming in future releases.
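The truncation issue noted above is easy to detect programmatically when the endpoint follows the OpenAI chat-completions format, where a reply cut off by the token limit reports a `finish_reason` of `"length"`. The sketch below retries with a larger budget in that case. The `send` callable, the starting budget and the ceiling are hypothetical; the fake backend exists only so the example runs offline.

```python
def is_truncated(response):
    """True if the first choice stopped because it hit the token limit."""
    return response["choices"][0].get("finish_reason") == "length"


def complete_with_retry(send, prompt, max_tokens=1024, ceiling=8192):
    """Call send(prompt, max_tokens), doubling the budget while the reply is cut off."""
    while True:
        response = send(prompt, max_tokens)
        if not is_truncated(response) or max_tokens >= ceiling:
            return response, max_tokens
        max_tokens = min(max_tokens * 2, ceiling)


def fake_send(prompt, max_tokens):
    """Offline stand-in for an API call: pretends the full answer needs 3000 tokens."""
    reason = "stop" if max_tokens >= 3000 else "length"
    return {"choices": [{"finish_reason": reason, "message": {"content": "..."}}]}


response, budget = complete_with_retry(fake_send, "Refactor this module.")
print(response["choices"][0]["finish_reason"], budget)
```

A ceiling matters here: if the model is looping rather than genuinely needing more room, raising the limit indefinitely only increases cost.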

Technical Requirements

Running Kimi K2 locally requires substantial hardware:

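- Far more than a single 80GB GPU for the full model: 1 trillion parameters at one byte each is roughly 1 TB of weights before any KV cache or activations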

- Recommended: Multiple GPUs for optimal performance

- Quantized versions available for reduced hardware requirements
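A back-of-the-envelope calculation helps size the hardware. The sketch below estimates the memory needed for the weights alone at a few precisions and the minimum number of 80GB GPUs that implies. It ignores KV cache, activations and framework overhead, so real requirements will be higher, and the precisions listed are illustrative rather than a statement of which formats are officially released.

```python
import math

TOTAL_PARAMS = 1_000_000_000_000  # 1 trillion total parameters, as quoted in this review
GPU_MEMORY_GB = 80                # one 80GB accelerator


def weights_gb(num_params, bytes_per_param):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_gpus(num_params, bytes_per_param, gpu_memory_gb=GPU_MEMORY_GB):
    """Lower bound on GPU count: weights only, no cache or overhead."""
    return math.ceil(weights_gb(num_params, bytes_per_param) / gpu_memory_gb)


for label, nbytes in [("16-bit", 2.0), ("8-bit", 1.0), ("4-bit", 0.5)]:
    size = weights_gb(TOTAL_PARAMS, nbytes)
    gpus = min_gpus(TOTAL_PARAMS, nbytes)
    print(f"{label}: ~{size:,.0f} GB of weights, at least {gpus} x {GPU_MEMORY_GB}GB GPUs")

# Prints:
# 16-bit: ~2,000 GB of weights, at least 25 x 80GB GPUs
# 8-bit: ~1,000 GB of weights, at least 13 x 80GB GPUs
# 4-bit: ~500 GB of weights, at least 7 x 80GB GPUs
```

Note that the 32 billion active parameters reduce the compute per token, not the memory footprint: unless experts are offloaded to CPU memory or disk, every expert has to be resident because any of them may be selected for the next token.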

Conclusion: The Future is Open and Agentic

This Kimi K2 review reveals a model that challenges everything we thought we knew about the gap between open-source and proprietary AI. Moonshot AI hasn't just created another large language model—they've delivered a genuinely new category of AI that acts rather than merely responds.

For developers and researchers, Kimi K2 represents a watershed moment. The combination of state-of-the-art performance, complete architectural transparency, and true agentic capabilities opens doors that were previously locked behind corporate APIs and usage limits.

Who should adopt Kimi K2 immediately:

- Development teams building AI-powered coding tools

- Researchers requiring customizable, high-performance models

- Organizations with strict data sovereignty requirements

- Startups looking to build differentiated AI applications without API dependencies

Who should wait for future updates:

- Teams requiring immediate vision processing capabilities

- Applications needing the absolute broadest general knowledge

- Use cases requiring extensive safety filtering and content moderation

The release of Kimi K2 signals a new era in AI development—one where open-source doesn't mean compromising on capabilities. With OpenAI's delayed OSU model on the horizon, the competition in open-source AI is heating up, but Moonshot has already established a formidable lead.

Ready to experience Open Agentic Intelligence yourself? You can try Kimi K2 free at kimi.com, integrate it via API at platform.moonshot.ai, or deploy it on your own infrastructure using the models available on GitHub. The future of AI is here, and it's more open than ever.

The AI landscape just changed. The question isn't whether Kimi K2 can compete with other frontier LLMs; it's what you'll build now that this level of AI capability is truly in your hands.